Focusing behavioural economics on development professionals

The World Development Report offers a useful overview of the way behavioural economics affects the welfare of low-income people around the world. But the most striking part of the report is that it focuses the lens of behavioural economics not just on people in low-income countries, but on development professionals themselves.

A torrent of research in economics and psychology over the last quarter-century has examined how people actually make decisions. As many of us who have vowed to start a diet, get more exercise, or save more money can attest, the ways in which real-world people make decisions can get in the way of accomplishing our own goals. The 2015 World Development Report from the World Bank, with the theme of “Mind, Society, and Behaviour,” offers a useful overview of how these issues of “behavioural economics” affect the welfare of low-income people around the world. But at least to me, the single most striking part of the report is that it focuses the lens of behavioural economics not just on people in low-income countries, but also on development professionals themselves.

The first few chapters of the report are organized around three groups of common behavioural biases:

“First, people make most judgments and most choices automatically, not deliberatively: we call this ‘thinking automatically.’ Second, how people act and think often depends on what others around them do and think: we call this ‘thinking socially.’ Third, individuals in a given society share a common perspective on making sense of the world around them and understanding themselves: we call this ‘thinking with mental models.’”

The next six chapters explore how these factors play out in the context of poverty, early childhood development, household finance, productivity, health, and climate change.

This part of the report is full of interesting studies. Here, for instance, is one about thinking automatically:

Fruit vendors in Chennai, India, provide a particularly vivid example. Each day, the vendors buy fruit on credit to sell during the day. They borrow about 1,000 rupees (the equivalent of $45 in purchasing parity) each morning at the rate of almost 5 percent per day and pay back the funds with interest at the end of the day. By forgoing two cups of tea each day, they could save enough after 90 days to avoid having to borrow and would thus increase their incomes by 40 rupees a day, equivalent to about half a day’s wages. But they do not do that. Thinking as they always do (automatically) rather than deliberatively, the vendors fail to go through the exercise of adding up the small fees incurred over time to make the dollar costs salient enough to warrant consideration.

In this as in many other examples, it can sometimes seem in the behavioural economics literature that people are trapped by their own inconsistencies and unable to accomplish their own desires, unless they are nudged along by the well-intended assistance of a beneficent and all-seeing policymaker. But the lessons of behavioural economics apply to everyone, the policymaker as much as the typical citizen. The World Bank report, greatly to its credit, applies some of the exercises that reveal behavioural biases directly to its own development professionals. It should come as no surprise, of course, that they exhibit biases similar to everyone else's.

One example of “thinking automatically” is a “framing effect”: how a question is framed has a strong influence on the outcome. A typical finding is that people are loss-averse, meaning that they react differently when a choice is framed in terms of losses than when the same options are phrased in terms of gains. Here's the WDR:

One of the most famous demonstrations of the framing effect was done by Tversky and Kahneman. They posed the threat of an epidemic to students in two different frames, each time offering them two options. In the first frame, respondents could definitely save one-third of the population or take a gamble, where there was a 33 percent chance of saving everyone and a 66 percent chance of saving no one. In the second frame, they could choose between a policy in which two-thirds of the population definitely would die or take a gamble, where there was a 33 percent chance that no one would die and a 66 percent chance that everyone would die. Although the first and second conditions frame outcomes differently — the first in terms of gains, the second in terms of losses — the policy choices are identical. However, the frames affected the choices students made. Presented with the gain frame, respondents chose certainty; presented with a loss frame, they preferred to take their chances. The WDR 2015 team replicated the study with World Bank staff and found the same effect. In the gain frame, 75 percent of World Bank staff respondents chose certainty; in the loss frame, only 34 percent did. Despite the fact that the policy choices are equivalent, how they were framed resulted in drastically different responses.
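The claim that the two frames offer identical policy choices can be checked with a little arithmetic. The sketch below is only an illustration: the population size of 600 is a hypothetical number picked for convenience, and the passage's rounded 33 and 66 percent are assumed to mean exact thirds.

```python
from fractions import Fraction

# Hypothetical population size, chosen only for illustration;
# the quoted passage does not give one.
POPULATION = 600

# The passage rounds to 33 and 66 percent. Exact thirds are assumed here.
P_GOOD = Fraction(1, 3)
P_BAD = 1 - P_GOOD


def gain_frame(population):
    """Expected survivors for (certain option, gamble), stated as lives saved."""
    certain = Fraction(population, 3)          # one-third definitely saved
    gamble = P_GOOD * population + P_BAD * 0   # everyone saved, or no one
    return certain, gamble


def loss_frame(population):
    """Expected survivors for (certain option, gamble), stated as deaths."""
    certain = population - Fraction(2 * population, 3)  # two-thirds definitely die
    gamble = P_GOOD * (population - 0) + P_BAD * (population - population)
    return certain, gamble


gain = gain_frame(POPULATION)
loss = loss_frame(POPULATION)

# Both frames describe the same pair of options in expectation.
print("gain frame:", [int(x) for x in gain])
print("loss frame:", [int(x) for x in loss])
print("identical:", gain == loss)
```

Under these assumptions the certain option and the gamble also have the same expected number of survivors within each frame, so the two options differ only in risk, and the two frames differ only in wording.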

As another example, the ability to interpret straightforward numerical data diminishes when the data describe a controversial subject. In one devilishly clever experiment, people were randomly divided into two groups and presented with the same data pattern. Some were told that the data were about the non-emotive subject of how well a skin cream worked, while others were told that the data were about the effectiveness of gun control laws. People were quite accurate at describing what the data showed when they referred to skin cream, but they became much less accurate when the same data were supposed to describe gun control. The World Bank researchers presented this same data to development experts. The experts who were given the data as applying to skin cream interpreted it clearly. But the experts who were given the same data as applying to whether a higher minimum wage reduced the poverty rate became much less accurate, apparently because their interpretation was clouded by pre-existing prejudices.

Yet another example focuses on the issue of sunk costs. A problem in many projects, including development projects, is that once a lot of money has been spent there is pressure not to abandon the project, even when it becomes clear that the project is doomed. Here's the scenario from the WDR:

The WDR 2015 team investigated the susceptibility of World Bank staff to sunk cost bias. The surveyed staff were randomly assigned to scenarios in which they assumed the role of task team leader managing a five-year, $500 million land management, conservation, and biodiversity program focusing on the forests of a small country. The program has been active for four years. A new provincial government comes into office and announces a plan to develop hydropower on the main river of the forest, requiring major resettlement. However, the government still wants the original project completed, despite the inconsistency of goals. The difference between the scenarios was the proportion of funds already committed to the project. For example, in one scenario, the staff were told that only 30 percent ($150 million) of the funds had been spent, while in another scenario staff were told that 70 percent ($350 million) of the funds had been spent. Staff saw only one of the four scenarios. World Bank staff were asked whether they would continue the doomed project by committing additional funds. While the exercise was rather simplistic and clearly did not provide all the information necessary to make a decision, it highlighted the differences among groups randomly assigned to different levels of sunk cost. As levels of sunk cost increased, so did the propensity of the staff to continue.

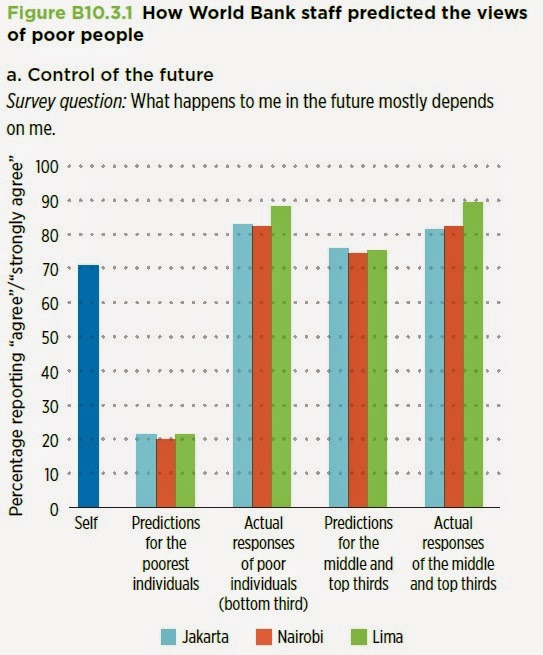

A final example looks at the mental models that development experts have of the poor. What do development experts think that the poor believe, and how does it compare to what the poor actually believe? For example, development experts were asked if they thought individuals in low-income countries would agree with the statement: “What happens to me in the future mostly depends on me.” The development experts thought that only about 20% of the poorest third would agree with this statement, but about 80% actually did. In fact, the share of those agreeing with the statement in the bottom third of the income distribution was much the same as for the upper two-thirds, and higher than the answer the development experts gave for themselves.

Figure 1: How World Bank staff predicted the views of poor people, question A) Control of the future

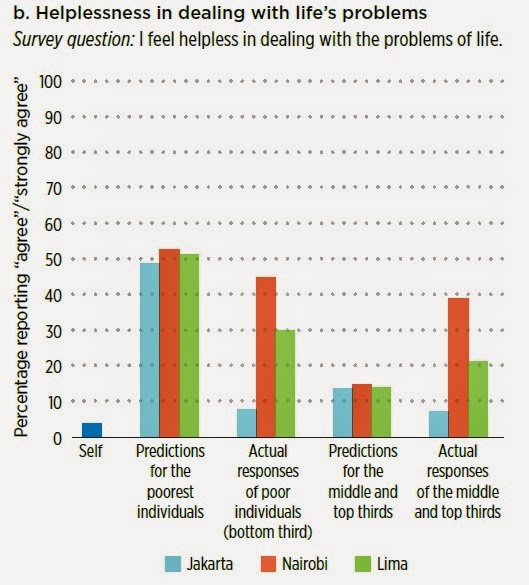

Similarly, development experts thought that about half of those in the bottom third of the income distribution would agree with the statement: “I feel helpless in dealing with the problems of life.” This estimate turns out to be roughly true for Nairobi, an overstatement for Lima, and wildly out of line with beliefs in Jakarta. Presumably, these kinds of answers are important in thinking about how to present and frame development policy.

Figure 2: How World Bank staff predicted the views of poor people, question B) Helplessness in dealing with life’s problems

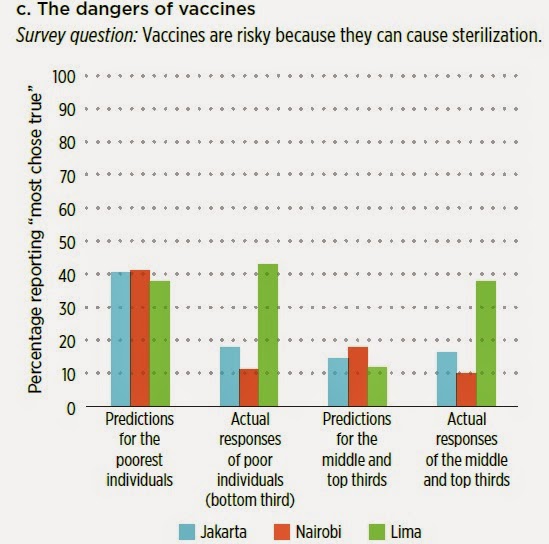

Finally, the development experts thought that about 40% of the bottom third in the income distribution would agree with the statement: “Vaccines are risky because they can cause sterilization.” It turns out that this is accurate for Lima, but a wild overstatement for Nairobi and Jakarta. Again, such differences of opinion are obviously quite important in designing a public health campaign to encourage more vaccination.

Figure 3: How World Bank staff predicted the views of poor people, question C) The danger of vaccines

The report summarizes the implications of these kinds of studies unflinchingly:

Experts, policy makers, and development professionals are also subject to the biases, mental shortcuts (heuristics), and social and cultural influences described elsewhere in this Report. Because the decisions of development professionals often can have large effects on other people’s lives, it is especially important that mechanisms be in place to check and correct for those biases and influences. Dedicated, well-meaning professionals in the field of development — including government policy makers, agency officials, technical consultants, and frontline practitioners in the public, private, and non-profit sectors — can fail to help, or even inadvertently harm, the very people they seek to assist if their choices are subtly and unconsciously influenced by their social environment, the mental models they have of the poor, and the limits of their cognitive bandwidth. They, too, rely on automatic thinking and fall into decision traps. Perhaps the most pressing concern is whether development professionals understand the circumstances in which the beneficiaries of their policies actually live and the beliefs and attitudes that shape their lives...

The deeper point here is of course not just about the World Bank or development experts, but about all policymakers, and especially policymakers who are seeking to use findings from behavioural economics to nudge ordinary citizens toward alternative courses of action. Policymakers are subject to thinking automatically, social pressures, and misguided mental models as well. Some of the possible answers involve finding ways to challenge groupthink, perhaps by having a group designated to make the case for the other side, or by finding another way to force a more thorough consideration and discussion of alternatives. Another suggestion is that “development professionals should ‘eat their own dog food’: that is, they should try to experience first-hand the programs and projects they design.”

The World Bank’s World Development Report 2015 calls on the development community to shift its focus and supports this proposal with findings from IGC research.

This is an edited version of an article that was published on The Conversable Economist.